The Rise, Fall and Revival of AMD

AMD is one of the oldest designers of large scale microprocessors and has been the subject of polarizing debate among technology enthusiasts for nearly 50 years. Its story makes for a thrilling tale -- filled with heroic successes, foolhardy errors, and a close shave with rack and ruin. Where other semiconductor firms have come and gone, AMD has weathered many storms and fought numerous battles, in boardrooms, courts, and stores.

In this feature we'll revisit the company's past, examine the twists and turns in the path to the present, and wonder at what lies ahead for this Silicon Valley veteran.

The rise to fame and fortune

To begin our story, we need to roll back the years and head for America and the late 1950s. Thriving after the hard years of World War II, this was the time and place to be if you wanted to experience the forefront of technological innovation.

Companies such as Bell Laboratories, Texas Instruments, and Fairchild Semiconductor employed the very best engineers, and churned out numerous firsts: the bipolar junction transistor, the integrated circuit, and the MOSFET (metal oxide semiconductor field effect transistor).

These young technicians wanted to research and develop ever more exciting products, but with cautious senior managers mindful of the times when the world was fearful and unstable, frustration amongst the engineers built a desire to strike out alone.

And so, in 1968, two employees of Fairchild Semiconductor, Robert Noyce and Gordon Moore, left the company and forged their own path. N M Electronics opened its doors that summer, to be renamed only weeks later as Integrated Electronics -- Intel, for short.

Others followed suit and less than a year later, another 8 people left and together they set up their own electronics design and manufacturing company: Advanced Micro Devices (AMD, naturally).

The group was headed by Jerry Sanders, Fairchild's former director of marketing. They began by redesigning parts from Fairchild and National Semiconductor rather than trying to compete directly with the likes of Intel, Motorola, and IBM (who spent significant sums of money on research and development of new integrated circuits).

From these humble beginnings, and headquartered in Silicon Valley, AMD was within a few months offering products that boasted increased efficiency, stress tolerances, and speed. These microchips were designed to comply with US military quality standards, which proved a considerable advantage in the still-immature computer industry, where reliability and production consistency varied greatly.

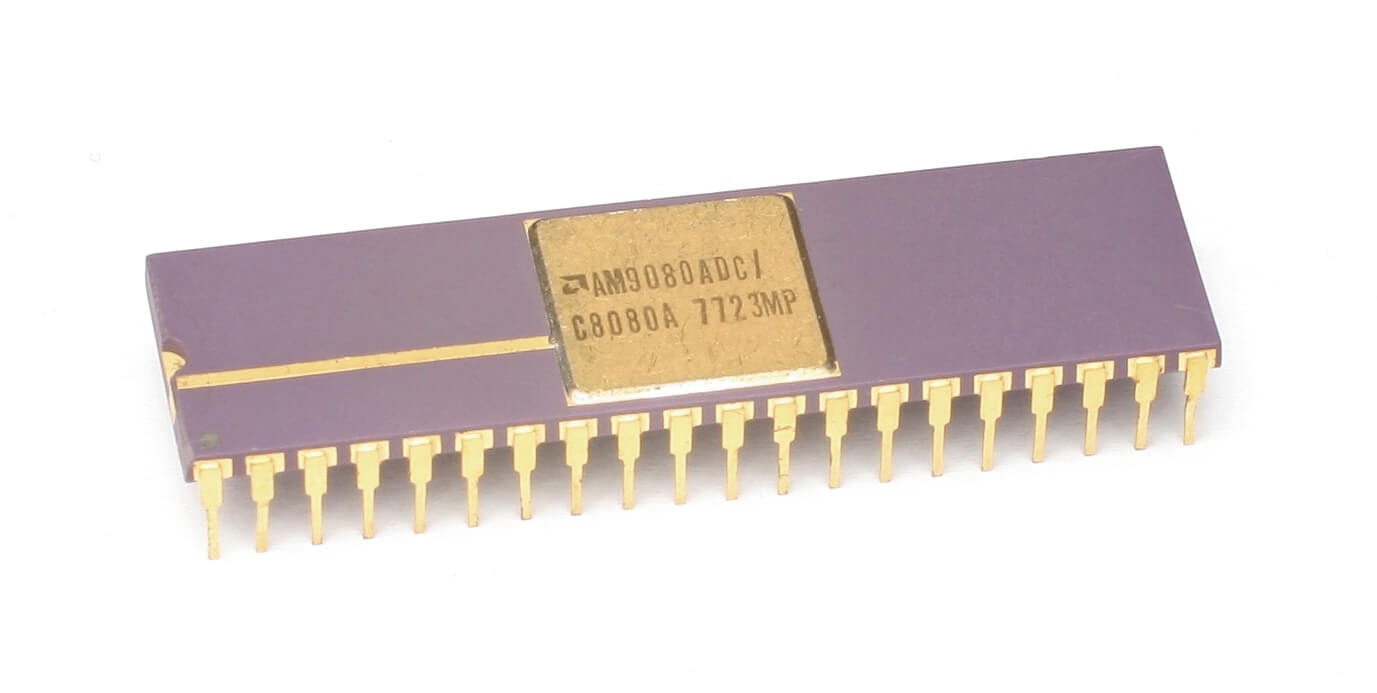

By 1974, two years after Intel released their first 8-bit microprocessor (the 8008), AMD was a public company with a portfolio of over 200 products -- a quarter of which were their own designs, including RAM chips, logic counters, and bit shifters. The following year saw a raft of new models: their own Am2900 integrated circuit (IC) family and the 2 MHz 8-bit Am9080, a reverse-engineered copy of Intel's successor to the 8008. The former was a collection of components that are now fully integrated in CPUs and GPUs, but in the mid-1970s, arithmetic logic units and memory controllers were all separate chips.

The blatant plagiarism of Intel's design might seem somewhat shocking by today's standards, but it was par for the course in the fledgling days of microchips. The CPU clone was eventually renamed as the 8080A, after AMD and Intel signed a cross-licensing agreement in 1976. You'd imagine this would cost a pretty penny or two, but it was just $325,000 ($1.65 million in today's dollars).

The deal allowed AMD and Intel to flood the market with ridiculously profitable chips, retailing at just over $350 or twice that for 'military' purchases. The 8085 (3 MHz) processor followed in 1977, and was soon joined by the 8086 (8 MHz). 1979 also saw production begin at AMD's Austin, Texas facility.

When IBM began moving from mainframe systems into so-called personal computers (PCs) in 1981, the outfit decided to outsource parts rather than develop processors in-house. Intel's 8086, the first ever x86 processor, and its 8088 variant (which powered the original IBM PC) were chosen with the express stipulation that AMD act as a second source to guarantee a constant supply for IBM's machines.

A contract between AMD and Intel was signed in February 1982, with AMD producing 8086, 8088, 80186, and 80188 processors -- not just for IBM, but for the many IBM clones that proliferated (Compaq being just one of them). AMD also started manufacturing the 16-bit Intel 80286, badged as the Am286, towards the end of 1982.

This was to become the first truly significant desktop PC processor, and while Intel's models generally ranged from 6 to 10 MHz, AMD's started at 8 MHz and went as high as 20 MHz. This undoubtedly marked the start of the battle for CPU dominance between the two Silicon Valley powerhouses; what Intel designed, AMD simply tried to make better.

This period represented a huge growth of the fledgling PC market, and noting that AMD had offered the Am286 with a significant speed boost over the 80286, Intel attempted to stop AMD in its tracks. This was done by denying them a licence for the next-generation 386 processors.

AMD sued, but arbitration took four and a half years to complete, and while the judgment found that Intel was not obligated to transfer every new product to AMD, it was determined that the larger chipmaker had breached an implied covenant of good faith.

Intel's licence denial occurred during a critical period, right as the IBM PC's share of the market was ballooning from 55% to 84%. Left without access to new processor specifications, AMD took over five years to reverse-engineer the 80386 into the Am386. Once completed, it proved once more to be more than a match for Intel's model. Where the original 386 debuted at just 12 MHz in 1985, and later managed to reach 33 MHz, the top-end version of the Am386DX launched in 1991 at 40 MHz.

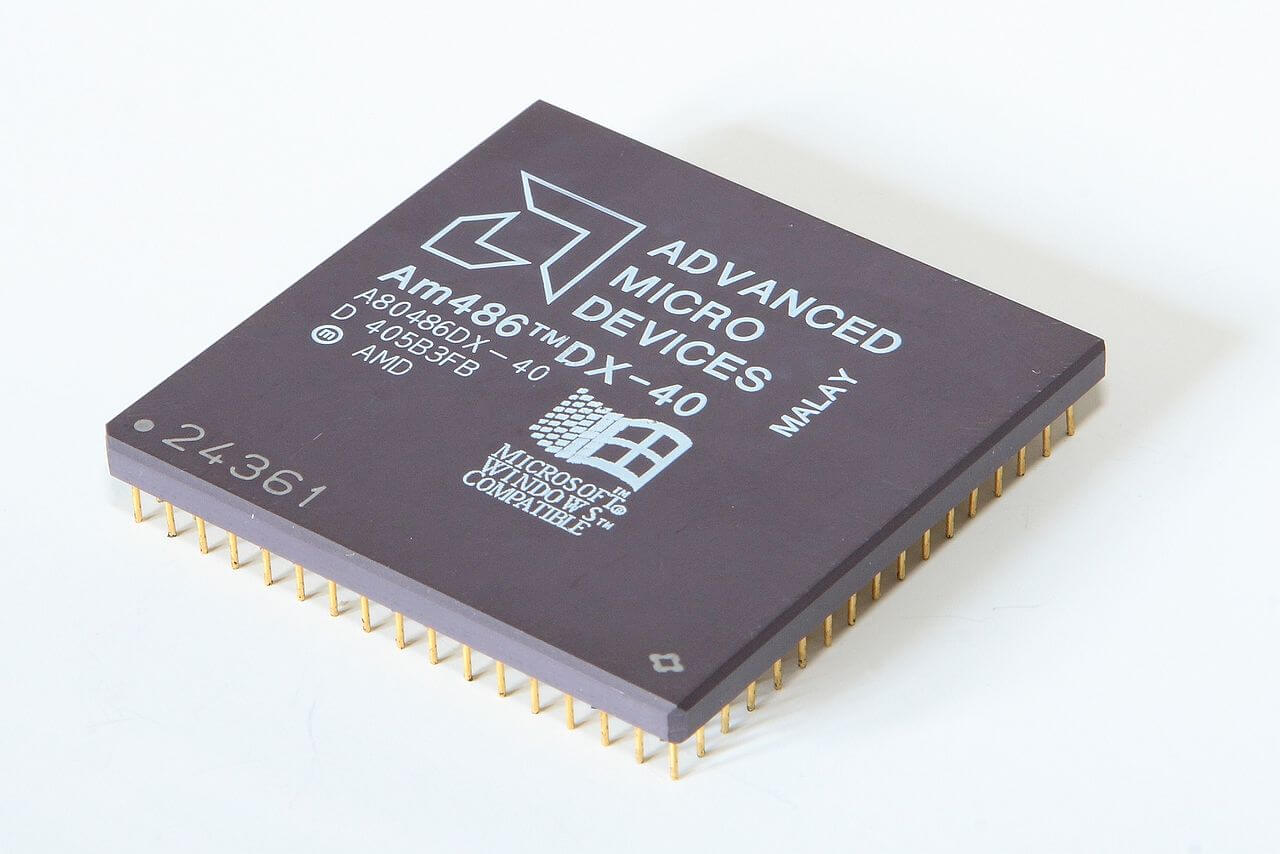

The Am386's success was followed by the release of 1993's highly competitive 40 MHz Am486, which offered roughly 20% more performance than Intel's 33 MHz i486 for the same price. This was to be replicated throughout the entire 486 lineup, and while Intel's 486DX topped out at 100 MHz, AMD offered (somewhat predictably at this stage) a snappier 120 MHz option. To better illustrate AMD's good fortune in this period, the company's revenue doubled from just over $1 billion in 1990 to well over $2 billion in 1994.

In 1995, AMD introduced the Am5x86 processor as a successor to the 486, offering it as a direct upgrade for older computers. The Am5x86 P75+ boasted a 133 MHz frequency, with the 'P75' referencing performance that was similar to Intel's Pentium 75. The '+' signified that the AMD chip was slightly faster at integer math than the competition.

To counter this, Intel altered its naming conventions to distance itself from products by its rival and other vendors. The Am5x86 generated significant revenue for AMD, both from new sales and from upgrades of 486 machines. As with the Am286, 386 and 486, AMD continued to extend the market scope of the parts by offering them as embedded solutions.

March 1996 saw the introduction of its first processor developed entirely by AMD's own engineers: the 5k86, later renamed to the K5. The chip was designed to compete with the Intel Pentium and Cyrix 6x86, and a strong execution of the project was pivotal to AMD -- the chip was expected to have a much more powerful floating point unit than Cyrix's and roughly equal to the Pentium 100, while the integer performance targeted the Pentium 200.

Ultimately, it was a missed opportunity, as the project was dogged by design and manufacturing issues. These resulted in the CPU not meeting frequency and performance goals, and it arrived late to market, causing it to suffer poor sales.

By this time, AMD had spent $857 million in stock on NexGen, a small fabless chip (design-only) company whose processors were made by IBM. AMD's K5 and the developmental K6 had scaling issues at higher clock speeds (~150 MHz and above) while NexGen's Nx686 had already demonstrated a 180 MHz core speed. After the buyout, the Nx686 became AMD's K6 and the development of the original chip was consigned to the scrapyard.

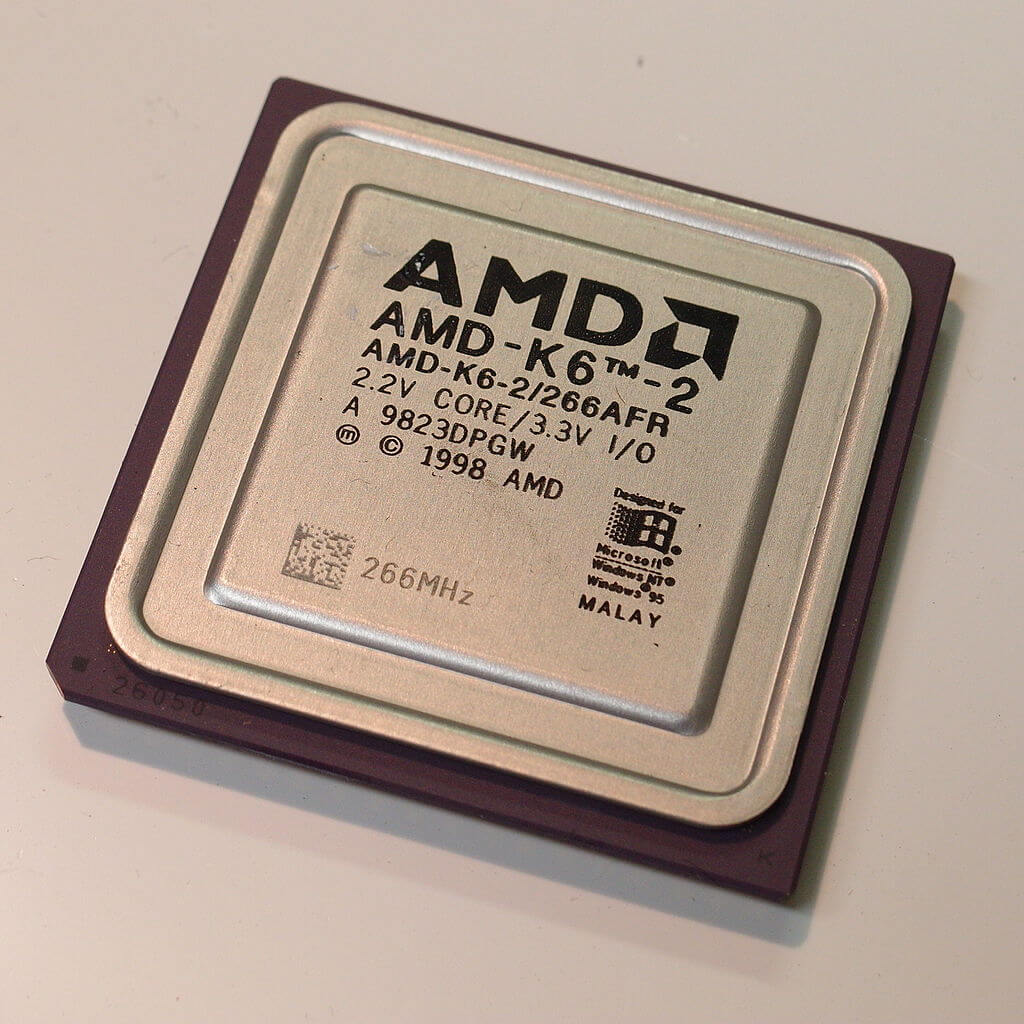

AMD's rise mirrored Intel's decline, from the early beginnings of the K6 architecture, which was pitted against Intel's Pentium, Pentium II and (largely rebadged) Pentium III. The K6 produced a quickening of AMD's success, owing its existence and capabilities to an ex-Intel employee, Vinod Dham (a.k.a. the "Father of Pentium"), who left Intel in 1995 to work at NexGen.

When the K6 hit shelves in 1997, it represented a viable alternative to the Pentium MMX. The K6 went from strength to strength -- from a 233 MHz speed in the initial stepping, to 300 MHz for the "Little Foot" revision in January 1998, 350 MHz in the "Chomper" K6-2 of May 1998, and eventually an astonishing 550 MHz with the "Chomper Extended" revision, first released in September 1998.

The K6-2 introduced AMD's 3DNow! SIMD (single instruction, multiple data) instruction set. Similar in concept to Intel's later SSE, it offered an easier route to accessing the CPU's floating point capabilities; the downside was that programmers needed to incorporate the new instructions in any new code, and patches and compilers had to be rewritten to use the feature.

Like the initial K6, the K6-2 represented much better value than the competition, often costing half as much as Intel's Pentium chips. The final iteration of the K6, the K6-III, was a more complicated CPU, and the transistor count now stood at 21.4 million -- up from 8.8 million in the first K6, and 9.4 million for the K6-2.

It incorporated AMD's PowerNow!, which dynamically altered clock speeds according to workload. With clock speeds eventually reaching 570 MHz, the K6-III was fairly expensive to produce, and its life was cut short by the arrival of the K7, which was better suited to compete with the Pentium III and beyond.

1999 was the zenith of AMD's golden age -- the arrival of the K7 processor, branded Athlon, showed that they were truly no longer the cheap, copycat option.

Starting at 500 MHz, Athlon CPUs utilized the new Slot A (EV6) and a new internal system bus licensed from DEC that operated at 200 MHz, eclipsing the 133 MHz Intel offered at the time. June 2000 brought the Athlon Thunderbird, a CPU cherished by many for its overclockability, which incorporated DDR RAM support and a full speed Level 2 on-die cache.

Thunderbird and its successors (Palomino, Thoroughbred, Barton, and Thorton) battled Intel's Pentium 4 throughout the first five years of the millennium, usually at a lower price point but always with better performance. Athlon was upgraded in September 2003 with the K8 (codenamed ClawHammer), better known as the Athlon 64, because it added a 64-bit extension to the x86 instruction set.

This episode is usually cited as AMD's defining moment. While AMD was surging ahead, the MHz-at-any-cost approach of Intel's Netburst architecture was being exposed as a classic example of a developmental dead end.

Revenue and operating income were both excellent for such a relatively small company. While not at Intel's levels of income, AMD was flush with success and hungry for more. But when you're at the very peak of the tallest of mountains, it takes every ounce of effort to stay there -- otherwise, there's only one way to go.

Paradise Lost

There is no single event responsible for AMD tumbling from its lofty position. A global economic crisis, internal mismanagement, poor financial predictions, becoming a victim of its own success, the fortunes and misdeeds of Intel -- these all played a part, in some way or another.

But let's first see how matters stood in early 2006. The CPU market was replete with offerings from both AMD and Intel, but the former had the likes of the exceptional K8-based Athlon 64 FX series. The FX-60 was a dual-core 2.6 GHz, whereas the FX-57 was single core, but ran at 2.8 GHz.

Both were head-and-shoulders above anything else, as shown by reviews at the time. They were hugely expensive, with the FX-60 retailing at over $1,000, but so was Intel's creme-de-la-creme, the 3.46 GHz Pentium Extreme Edition 955. AMD seemed to have the upper hand in the workstation/server market as well, with Opteron chips outperforming Intel's Xeon processors.

The problem for Intel was their Netburst architecture -- the ultra-deep pipeline structure required very high clock speeds to be competitive, which in turn increased power consumption and heat output. The design had reached its limits and was no longer up to scratch, so Intel ditched its development and turned to their older Pentium Pro/Pentium M CPU architecture to build a successor for the Pentium 4.

The initiative first produced the Yonah design for mobile platforms and then the dual-core Conroe architecture for desktops, in July 2006. Such was Intel's need to save face that they relegated the Pentium name to low-end budget models and replaced it with Core -- 13 years of brand dominance swept away in an instant.

The move to a low-power, high-throughput chip design wound up being ideally suited to a multitude of evolving markets and almost overnight, Intel took the performance crown in the mainstream and enthusiast sectors. By the end of 2006, AMD had been firmly pushed from the CPU summit, but it was a disastrous managerial decision that pushed them right down the slope.

Three days before Intel launched the Core 2 Duo, AMD made public a move that had been fully approved by then-CEO Hector Ruiz (Sanders had retired four years earlier). On July 24 2006, AMD announced that it intended to acquire the graphics card manufacturer ATI Technologies, in a deal worth $5.4 billion (comprising $4.3 billion in cash and loans, and $1.1 billion raised from 58 million shares). The deal was a huge financial gamble, representing 50% of AMD's market capitalization at the time, and while the purchase made sense, the price absolutely did not.

ATI was grossly overvalued, as it wasn't (nor was Nvidia) pulling in anything close to that kind of revenue. ATI had no manufacturing sites, either -- its worth was almost entirely based on intellectual property.

AMD eventually acknowledged this mistake when it absorbed $2.65 billion in write-downs due to overestimating ATI's goodwill valuation.

To compound the lack of management foresight, Imageon, the handheld graphics division of ATI, was sold to Qualcomm in a paltry $65 million deal. That division is now named Adreno, an anagram of "Radeon", and is an integral component of the Snapdragon SoC (!).

Xilleon, a 32-bit SoC for digital TVs and TV cable boxes, was shipped off to Broadcom for $192.8 million.

In addition to burning money, AMD's eventual response to Intel's refreshed architecture was distinctly underwhelming. Two weeks after Core 2's release, AMD's President and COO, Dirk Meyer, announced the finalization of AMD's new K10 Barcelona processor. This would be their decisive move in the server market, as it was a fully-fledged quad core CPU, whereas at the time, Intel only produced dual core Xeon chips.

The new Opteron chip appeared in September 2007, to much fanfare, but instead of stealing Intel's thunder, the party was officially halted by the discovery of a bug that in rare circumstances could result in lockups involving nested cache writes. Rare or not, the TLB bug put a stop to AMD's K10 production; in the meantime, a BIOS patch that cured the problem on outgoing processors would do so at the loss of roughly 10% performance. By the time the revised 'B3 stepping' CPUs shipped six months later, the damage had been done, both for sales and reputation.

Just over a year after Meyer's announcement, near the end of 2007, AMD brought the quad-core K10 design to the desktop market. By then, Intel was forging ahead and had released the now-famous Core 2 Quad Q6600. On paper, the K10 was the superior design -- all four cores were in the same die, unlike the Q6600 which used two separate dies on the same package. However, AMD was struggling to hit expected clock speeds, and the best version of the new CPU was only 2.3 GHz. That was slower, albeit by 100 MHz, than the Q6600, and it was also a little more expensive.

But the most puzzling aspect of it all was AMD's decision to come out with a new model name: Phenom. Intel switched to Core because Pentium had become synonymous with excessive price and power consumption, and relatively poor performance. On the other hand, Athlon was a name that computing enthusiasts knew all too well and it had the speed to match its reputation. The first version of Phenom wasn't really bad -- it just wasn't as good as the Core 2 Quad Q6600, a product that was already readily available, plus Intel had faster offerings on the market, too.

Bizarrely, AMD seemed to make a conscious effort to abstain from advertising. They also had zero presence on the software side of the business; a very curious way to run a business, let alone one fighting in the semiconductor trade. But no review of this era in AMD's history would be complete without taking into consideration Intel's anti-competitive deeds. At this juncture, AMD was not only fighting Intel's chips, but also the company's monopolistic activities, which included paying OEMs large sums of money -- billions in total -- to actively keep AMD CPUs out of new computers.

In the first quarter of 2007, Intel paid Dell $723 million to remain the sole provider of its processors and chipsets -- accounting for 76% of Dell's total operating income of $949 million. AMD would later win a $1.25 billion settlement in the matter, surprisingly low on the surface, but probably explained by the fact that at the time of Intel's shenanigans, AMD itself couldn't actually supply enough CPUs to its existing customers.

Not that Intel needed to do any of this. Unlike AMD, they had rigid long-term goal setting, as well as greater product and IP diversity. They also had cash reserves like no one else: by the end of the first decade in the new millennium, Intel was pulling in over $40 billion in revenue and $15 billion in operating income. This provided huge budgets for marketing, research and software development, as well as foundries uniquely tailored to its own products and timetable. Those factors alone ensured AMD struggled for market share.

A multi-billion dollar overpayment for ATI and the attendant loan interest, a disappointing successor to the K8, and problematic chips arriving late to market were all bitter pills to swallow. But matters were about to get worse.

One step forward, one sideways, any number back

By 2011, the global economy was struggling to rebound from the financial crisis of 2008. AMD had ejected its flash memory division a few years before, along with all its chip-making foundries -- they ultimately became GlobalFoundries, which AMD still uses for some of its products. Roughly 10% of its workforce had been dropped, and all together the savings and cash injection meant that AMD could knuckle down and focus entirely on processor design.

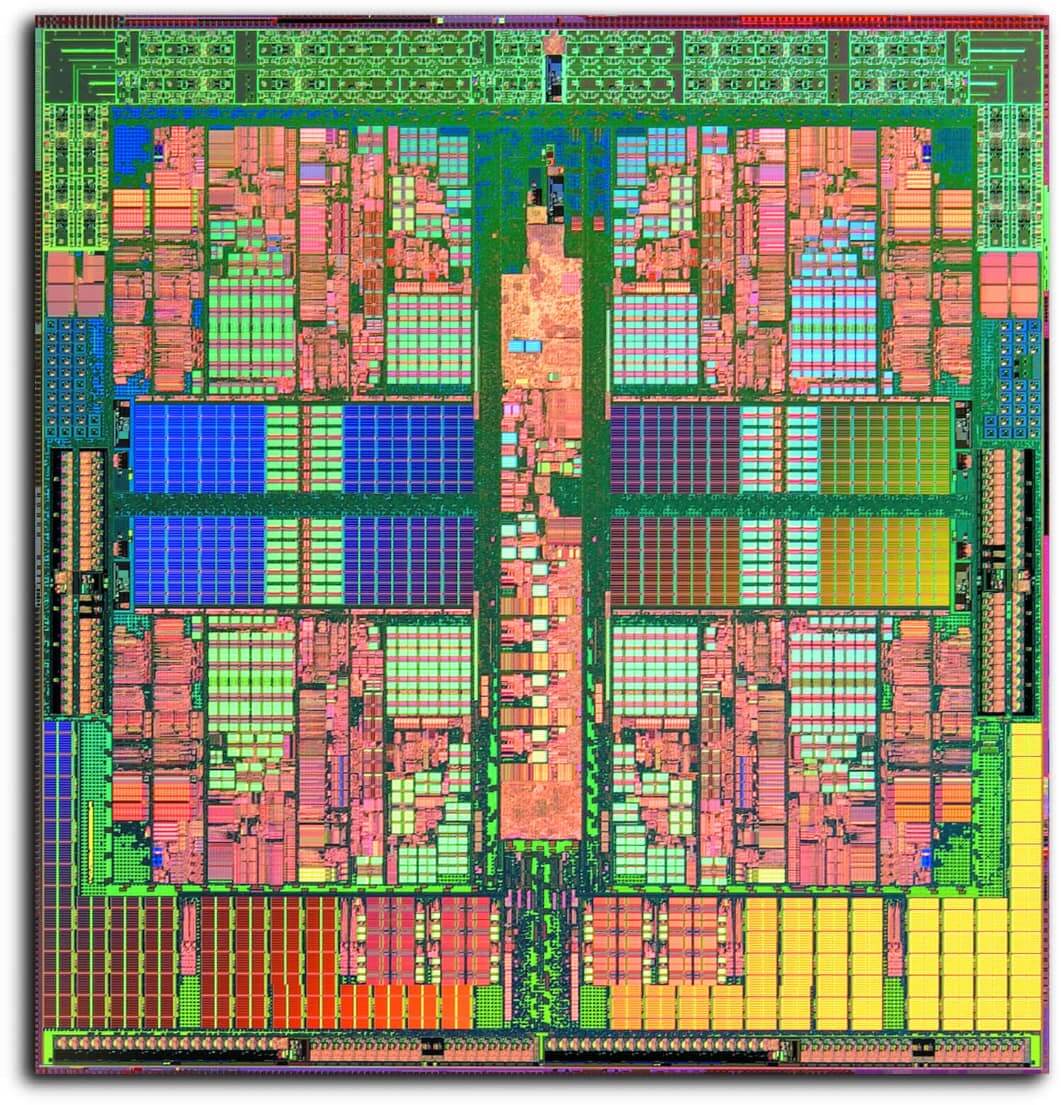

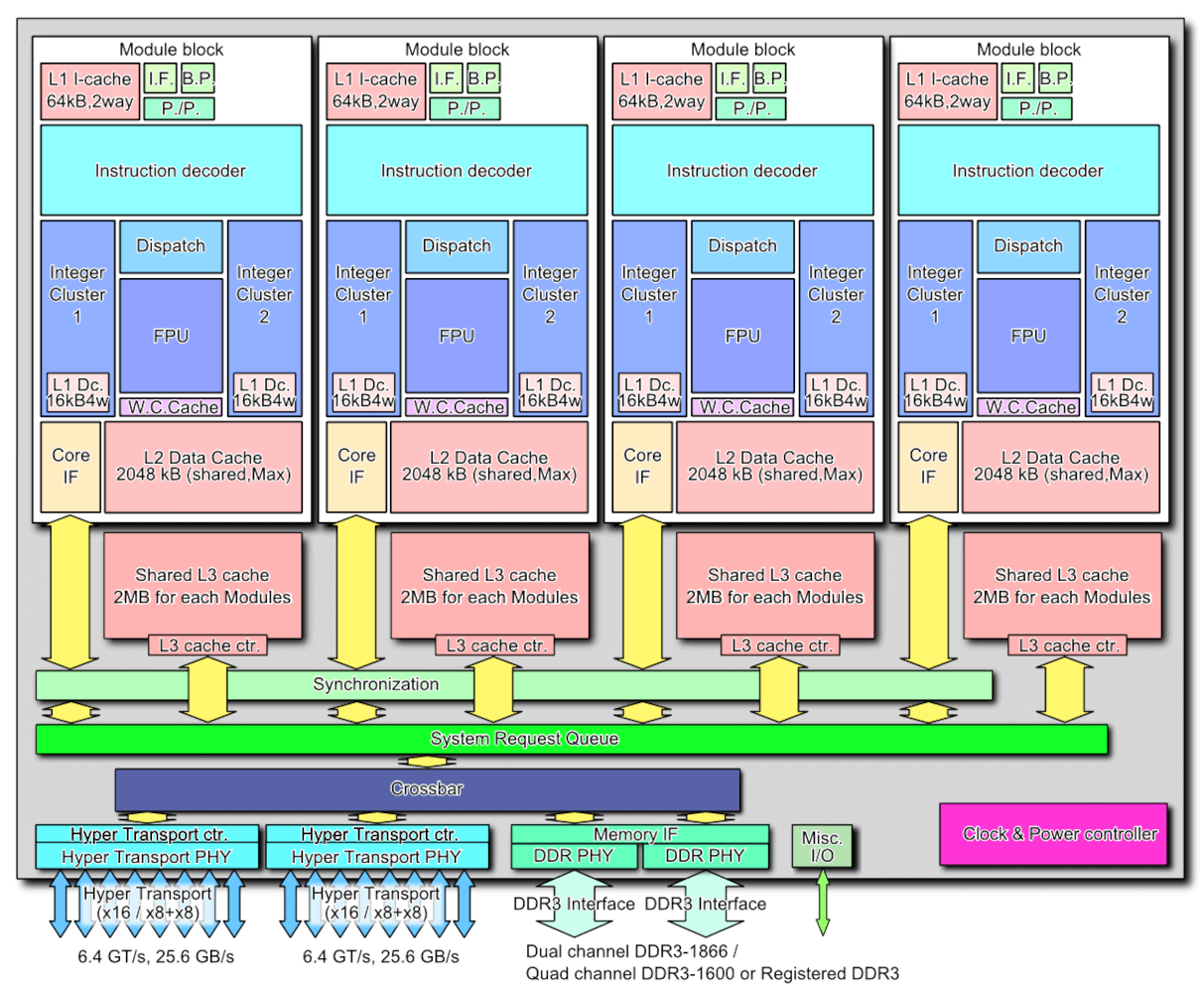

Rather than improving the K10 design, AMD started afresh with a new structure, and towards the end of 2011, the Bulldozer architecture was launched. Where K8 and K10 were conventional multicore processors, with every core fully independent and running a single thread, the new layout was classed as 'clustered multithreading.'

AMD took a shared, modular approach with Bulldozer -- each cluster (or module) contained two integer processing cores, but they weren't totally independent. They shared the L1 instruction cache and the L2 cache, the fetch/decode stage, and the floating point unit. AMD even went as far as to drop the Phenom name and hark back to their glory days of the Athlon FX, by simply naming the first Bulldozer CPUs as AMD FX.

The idea behind all of these changes was to reduce the overall size of the chips and make them more power efficient. Smaller dies would improve fabrication yields, leading to better margins, and the increase in efficiency would help to boost clock speeds. The scalable design would also make it suitable for a wider range of markets.

The best model in the October 2011 launch, the FX-8150, sported four clusters but was marketed as an 8-core, 8-thread CPU. By this era, processors had multiple clock speeds, and the FX-8150's base frequency was 3.6 GHz, with a turbo clock of 4.2 GHz. However, the chip was 315 square mm in size and had a peak power consumption of over 125 W. Intel had already released the Core i7-2600K: it was a traditional 4-core, 8-thread CPU, running at up to 3.8 GHz. It was significantly smaller than the new AMD chip, at 216 square mm, and used 30 W less power.
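The module arrangement is easiest to see as simple bookkeeping. The Python sketch below is purely illustrative (the article contains no code, and the names `BulldozerModule` and `chip_totals` are hypothetical): it models only the resource split described above, two integer cores per module sharing one floating point unit, and says nothing about actual performance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BulldozerModule:
    """Toy model of one Bulldozer module: two integer cores that share
    the fetch/decode front end, the L2 cache, and one floating point unit."""
    integer_cores: int = 2
    shared_fpus: int = 1


def chip_totals(modules: int) -> dict[str, int]:
    """Count what a chip built from `modules` modules would advertise."""
    m = BulldozerModule()
    return {
        "marketed_cores": modules * m.integer_cores,  # every integer core sold as a 'core'
        "threads": modules * m.integer_cores,         # one thread per integer core, no SMT
        "fpus": modules * m.shared_fpus,              # FP hardware scales with modules only
    }


print(chip_totals(4))  # four modules, as on the FX-8150
# prints: {'marketed_cores': 8, 'threads': 8, 'fpus': 4}
```

Changing the module count shows the same pattern: the advertised core count doubles with each module, while the floating point resources grow at only half that rate.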

On paper, the new FX should have dominated, but its performance was somewhat underwhelming -- at times, the ability to handle lots of threads would shine through, but single-threaded performance was often no better than the Phenom range it was set to replace, despite the superior clock speeds.

Having invested millions of dollars into Bulldozer's R&D, AMD was certainly not going to abandon the design, and the purchase of ATI was now starting to bear fruit. In the previous decade, AMD's first foray into a combined CPU and GPU package, called Fusion, was late to market and disappointingly weak.

But the project provided AMD with the means to tackle other markets. Earlier in 2011, another new architecture had been released, called Bobcat.

Aimed at low power applications, such as embedded systems, tablets, and notebooks, it was also the polar opposite design to Bulldozer: just a handful of pipelines and nothing much else. Bobcat received a much needed update a few years later, into the Jaguar architecture, and was selected by Microsoft and Sony to power the Xbox One and PlayStation 4 in 2013.

Although the profit margins would be relatively slim, as consoles are typically built down to the lowest possible price, both platforms sold in the millions and this highlighted AMD's ability to create custom SoCs.

AMD continued revising the Bulldozer design over the years -- Piledriver came first and gave us the FX-9590 (a 220 W, 5 GHz monstrosity), but Steamroller and the final version, Excavator (announced in 2011, with products using it arriving four years later), were more focused on reducing power consumption, rather than offering anything particularly new.

By then, the naming structure for CPUs had become confusing, to say the least. Phenom was long consigned to the history books, and FX had a somewhat poor reputation. AMD abandoned that classification and just labelled their Excavator desktop CPUs as the A-series.

The graphics division of the company, fielding the Radeon products, had similarly mixed fortunes. AMD retained the ATI brand name until 2010, swapping it for their own. In late 2011, they also completely replaced the GPU architecture created by ATI with Graphics Core Next (GCN). This design would last for nearly 8 years, finding its way into consoles, desktop PCs, workstations and servers; it's still in use today as the integrated GPU in AMD's so-called APU processors.

GCN processors grew to have immense compute performance, but the structure wasn't the easiest to get the best out of. The most powerful version AMD ever made, the Vega 20 GPU in the Radeon VII, boasted 13.4 TFLOPS of processing power and 1024 GB/s of bandwidth -- but in games, it just couldn't reach the same heights as the best from Nvidia.
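Both headline figures are theoretical peaks obtained by multiplying the chip's width by its clock rate. The back-of-the-envelope check below is not AMD's own derivation; it assumes Radeon VII specifications that the article does not state: 3840 stream processors, each completing one fused multiply-add (two floating point operations) per cycle, a 1750 MHz boost clock, and a 4096-bit HBM2 interface running at 2 Gbit/s per pin.

```latex
\begin{aligned}
\text{Peak FP32} &= 3840 \times 2\ \tfrac{\text{FLOP}}{\text{cycle}} \times 1.75\times10^{9}\ \tfrac{\text{cycle}}{\text{s}}
  = 13.44\times10^{12}\ \text{FLOP/s} \approx 13.4\ \text{TFLOPS} \\
\text{Bandwidth} &= \frac{4096\ \text{bit}}{8\ \text{bit/B}} \times 2.0\times10^{9}\ \text{s}^{-1}
  = 512\ \text{B} \times 2.0\times10^{9}\ \text{s}^{-1} = 1024\ \text{GB/s}
\end{aligned}
```

Because these are peak rates, they describe what the hardware could do with every unit kept busy, which is exactly what GCN found hard to achieve in games.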

Radeon products often came with a reputation for being hot, noisy, and very power hungry. The initial iteration of GCN, powering the HD 7970, required well over 200 W of power at full load -- but it was manufactured on a relatively large process node, TSMC's 28nm. By the time GCN had reached full maturity, in the form of the Vega 10, the chips were being made by GlobalFoundries on their 14nm node, but energy requirements were no better, with the likes of the Radeon RX Vega 64 consuming a maximum of about 300 W.

While AMD had a decent product selection, the products just weren't as good as they should have been, and the company struggled to earn enough money.

| Financial Year | Revenue ($ billion) | Gross Margin | Operating Income ($ million) | Net Income ($ million) |
|---|---|---|---|---|
| 2016 | 4.27 | 23% | -372 | -497 |
| 2015 | 4.00 | 27% | -481 | -660 |
| 2014 | 5.51 | 33% | -155 | -403 |
| 2013 | 5.30 | 37% | 103 | -83 |
| 2012 | 5.42 | 23% | -1060 | -1180 |
| 2011 | 6.57 | 45% | 368 | 491 |

By the end of 2016, the company had posted a net loss for five consecutive years (2012's financials were battered by a $700 million final GlobalFoundries write-off). Debt was still high, even with the sale of its foundries and other branches, and not even the success of the system package in the Xbox and PlayStation provided enough help.
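The run of losses can be checked directly against the table. This is a minimal Python sketch that simply hard-codes the net income column from the table above (in $ million); the function name `trailing_loss_run` is hypothetical, and the script only counts the unbroken run of loss-making years ending at the most recent year, plus the combined loss over that run.

```python
# Net income in $ million, copied from the financial table (fiscal years 2011-2016).
net_income = {
    2011: 491,
    2012: -1180,
    2013: -83,
    2014: -403,
    2015: -660,
    2016: -497,
}


def trailing_loss_run(figures: dict[int, int]) -> tuple[int, int]:
    """Return (number of consecutive loss years ending at the latest year,
    cumulative net income over that run)."""
    years = 0
    total = 0
    for year in sorted(figures, reverse=True):  # walk back from the latest year
        value = figures[year]
        if value >= 0:
            break  # the run ends at the first profitable (or break-even) year
        years += 1
        total += value
    return years, total


run_length, run_total = trailing_loss_run(net_income)
print(f"{run_length} consecutive loss years, cumulative net loss of ${-run_total:,} million")
# prints: 5 consecutive loss years, cumulative net loss of $2,823 million
```

The same function can be pointed at the operating income column instead, which shows a shorter run, since 2013 was operationally profitable.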

At face value, AMD looked to be in deep trouble.

New stars a-ryze

With nothing left to sell and no sign of any large investments coming to save them, AMD could only do one thing: double down and restructure. In 2012, they picked up two people who would come to play vital roles in the revival of the semiconductor company.

Jim Keller, the former lead architect for the K8 range, had returned after a 13-year absence and set about heading up two projects: one an ARM-based design for the server markets, the other a standard x86 architecture, with Mike Clark (lead designer of Bulldozer) being the chief architect.

Joining him was Lisa Su, who had been Senior Vice President and General Manager at Freescale Semiconductor. She took up the same position at AMD and is generally credited, along with then-President Rory Read, as being behind the company's move into markets beyond the PC, particularly consoles.

Two years after Keller's return to AMD's R&D department, CEO Rory Read stepped down and the SVP/GM moved up. With a doctorate in electrical engineering from MIT and having conducted research into SOI (silicon-on-insulator) MOSFETs, Su had the academic background and the industrial experience needed to return AMD to its glory days. But nothing happens overnight in the world of large scale processors -- chip designs take several years, at best, before they are ready for market. AMD would have to ride out the storm until such plans could come to fruition.

While AMD continued to struggle, Intel went from strength to strength. The Core architecture and fabrication process nodes had matured nicely, and at the end of 2016, they posted a revenue of about $60 billion. For a number of years, Intel had been following a 'tick-tock' approach to processor development: a 'tick' would be a process refinement, typically in the form of a smaller node, whereas a 'tock' would be a new architecture on the established node.

However, not all was well behind the scenes, despite the huge profits and near-total market dominance. Intel had expected to be releasing CPUs on a cutting-edge 10nm node by 2016. That particular tick never happened -- indeed, the clock never really tocked, either. Their first 14nm CPU, using the Broadwell architecture, appeared in 2014 and the node and core design remained in place for half a decade.

The engineers at the foundries repeatedly hit yield issues with 10nm, forcing Intel to refine the older process and architecture each year. Clock speeds and power consumption climbed ever higher, but no new designs were forthcoming; an echo, perhaps, of their Netburst days. PC customers were left with frustrating choices: choose something from the powerful Core line, but pay a hefty price, or choose the weaker and cheaper FX/A-series.

But AMD had been quietly building a winning set of cards and played their hand at the annual E3 event. Using the eagerly awaited Doom reboot as the announcement platform, the completely new Zen architecture was revealed to the public.

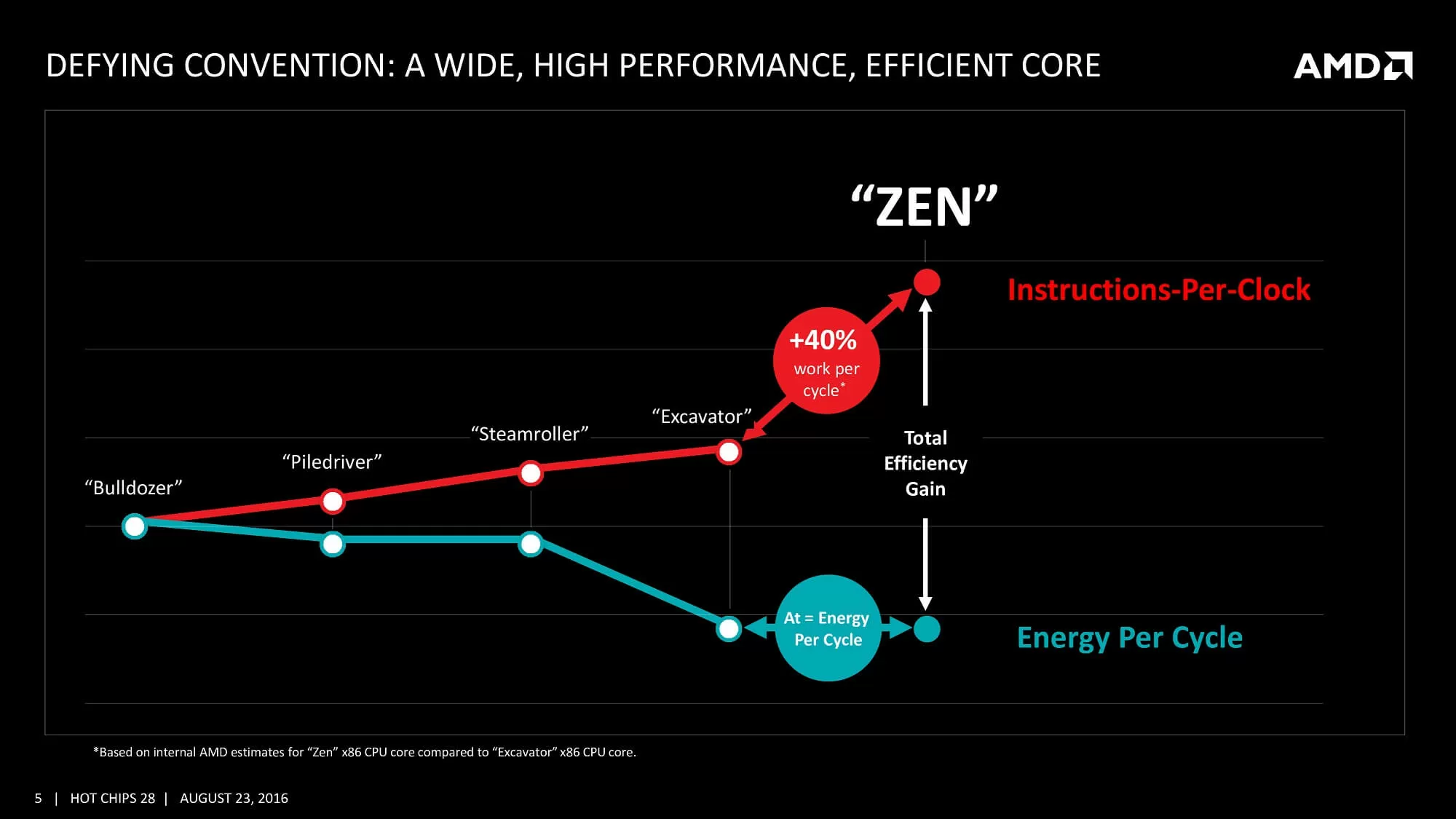

Very little was said about the fresh design besides phrases such as 'simultaneous multithreading', 'high bandwidth cache,' and 'energy efficient FinFET design.' More details were given during Computex, including a target of a 40% improvement in instructions per clock over the Excavator architecture.

To say this was ambitious would be an understatement -- especially in light of the fact that AMD had delivered modest 10% increases with each revision of the Bulldozer design, at best.

It would take them another 12 months before the chip actually appeared, but when it did, AMD's long-stewing plan was finally clear.

Any new hardware design needs the right software to sell it, but multi-threaded CPUs were facing an uphill battle. Despite consoles sporting eight-core CPUs, most games were still perfectly fine with just four. The main reasons were Intel's market dominance and the design of AMD's chip in the Xbox One and PlayStation 4. Intel had released their first 6-core CPU back in 2010, but it was hugely expensive (nearly $1,100). Others rapidly appeared, but it would be another seven years before Intel offered a truly affordable hexa-core processor, the Core i5-8400, at under $200.

The issue with the console processors was that the CPU layout consisted of two 4-core CPUs in the same die, and there was high latency between the two sections of the chip. So game developers tended to keep the engine's threads located on one of the sections, and just use the other for general background processes. Only in the world of workstations and servers was there a demand for seriously multi-threaded CPUs -- until AMD decided otherwise.

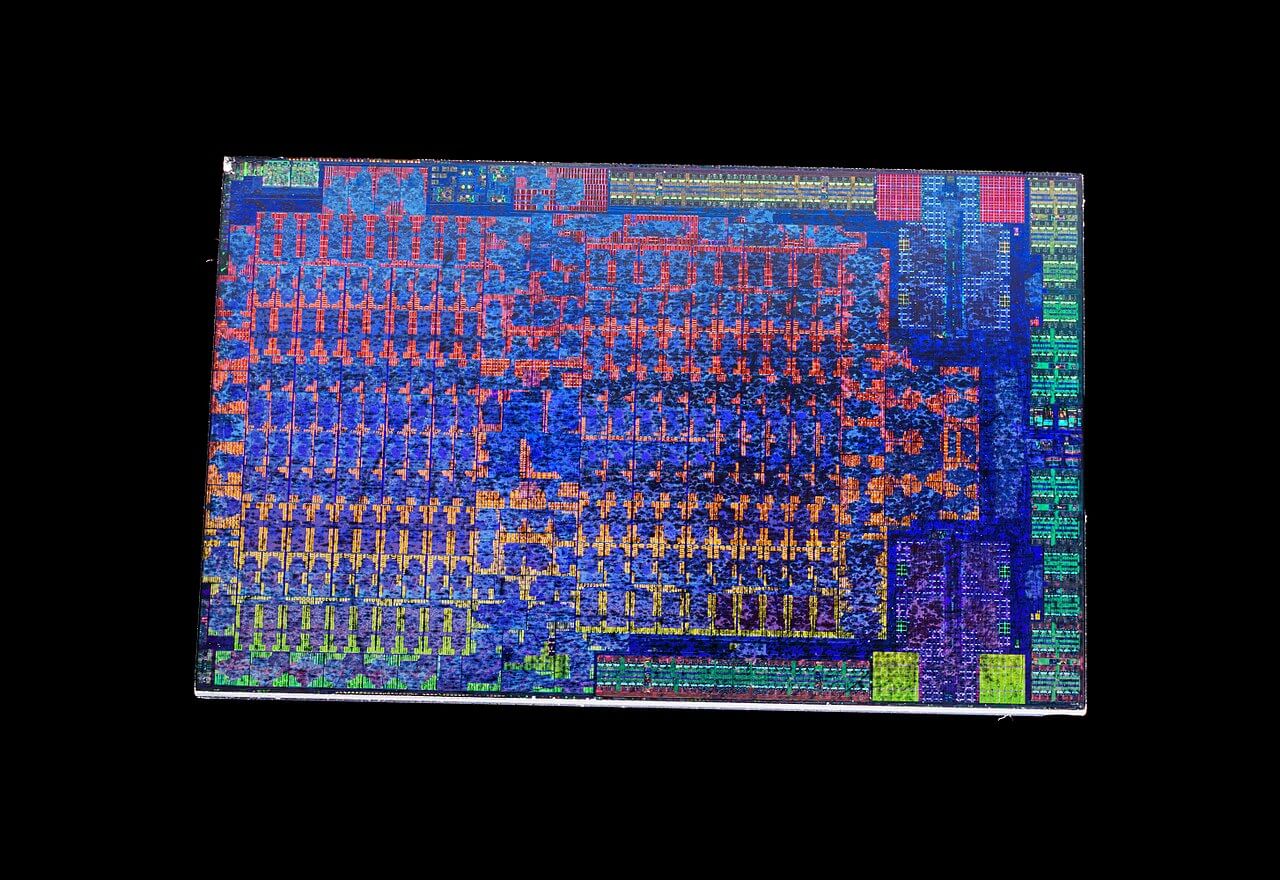

In March 2022, general desktop users could upgrade their systems with 1 of two viii-core,16-thread CPUs. A completely new architecture clearly deserved a new name, and AMD cast off Phenom and FX, to give us Ryzen.

Neither CPU was particularly cheap: the 3.6 GHz (4 GHz boost) Ryzen 7 1800X retailed at $500, with the 0.2 GHz slower 1700X selling for $100 less. In part, AMD was keen to shake off the perception of being the budget choice, but it was mostly because Intel was charging over $1,000 for their 8-core offering, the Core i7-6900K.

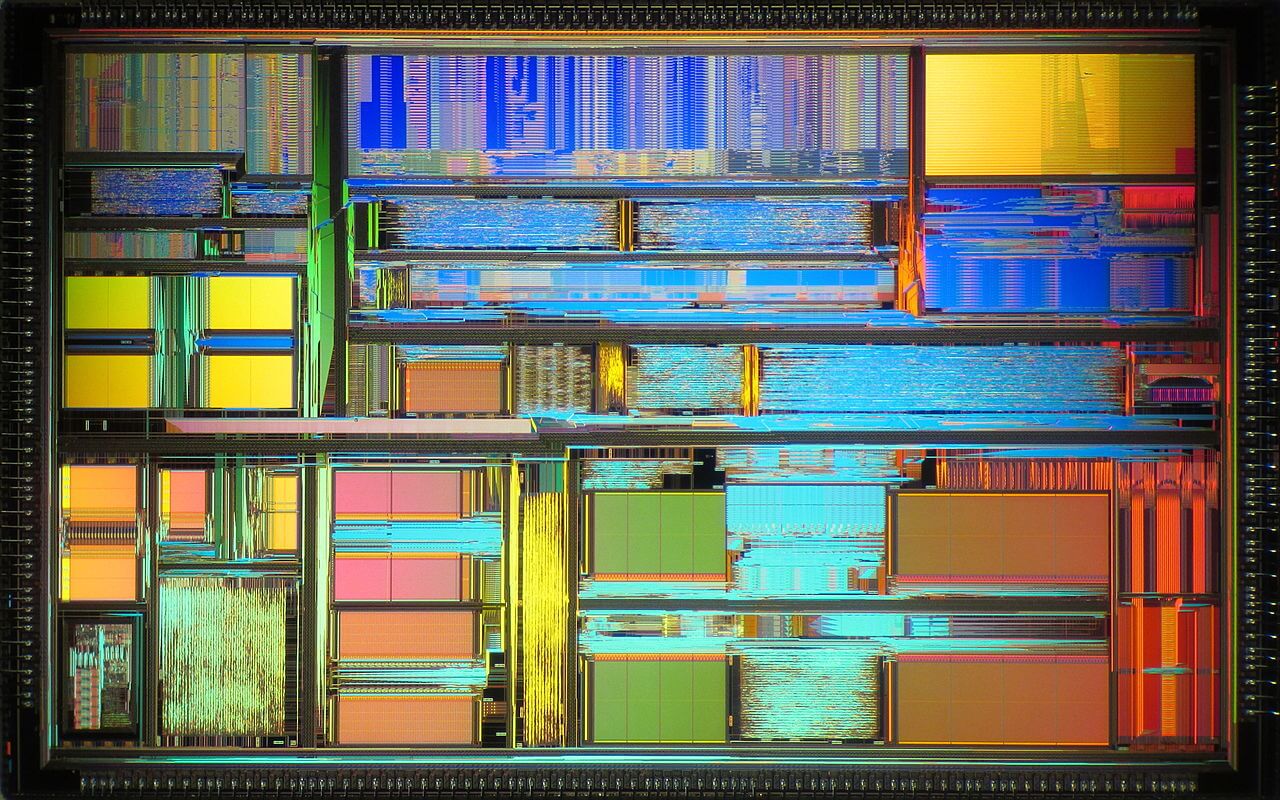

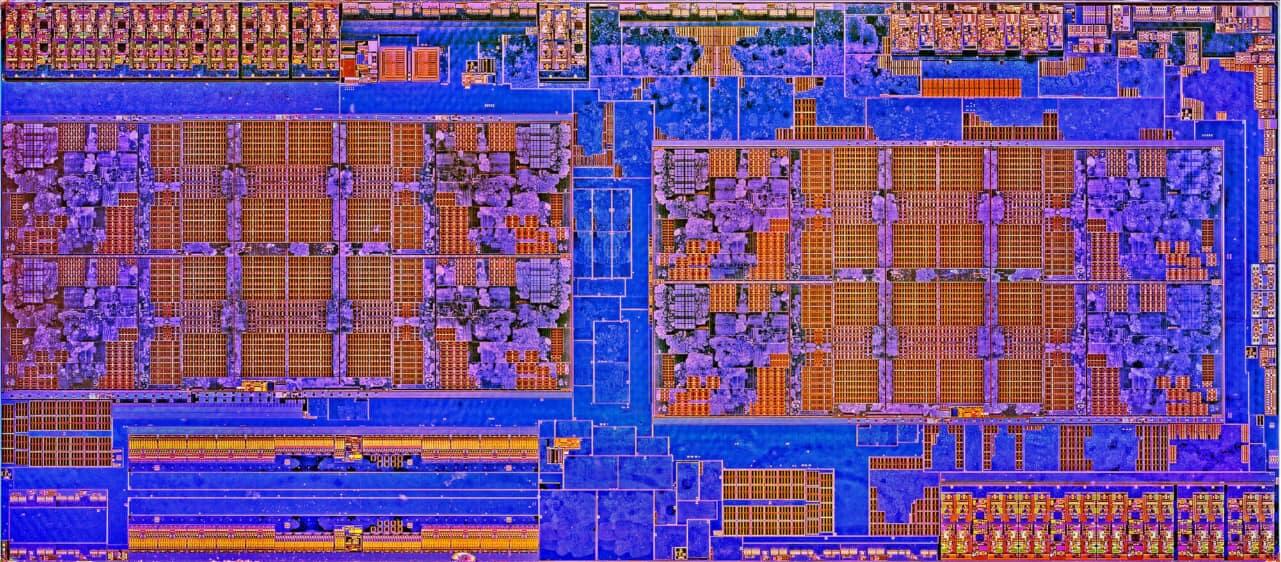

Zen took the best from all previous designs and melded them into a structure that focused on keeping the pipelines as busy as possible; doing this required significant improvements to the pipeline and cache systems. The new design dropped the sharing of L1/L2 caches, as used in Bulldozer, and each core was now fully independent, with more pipelines, better branch prediction, and greater cache bandwidth.

Reminiscent of the chip powering Microsoft and Sony's consoles, the Ryzen CPU was also a system-on-a-chip; the only thing it lacked was a GPU (later budget Ryzen models included a GCN-based GPU).

The die was sectioned into two so-called CPU Complexes (CCX), each with 4 cores and 8 threads. Also packed into the die was a southbridge processor -- the CPU itself offered controllers and links for PCI Express, SATA, and USB. This meant motherboards could, in theory, be made without a separate southbridge, but nearly all included one anyway, just to expand the number of possible device connections.

All of this would be for nothing if Ryzen couldn't perform, and AMD had a lot to prove in this area after years of playing second fiddle to Intel. The 1800X and 1700X weren't perfect: as good as anything Intel had for professional applications, but slower in games.

AMD had other cards to play: a month after the first Ryzen CPUs hit the market came 6 and 4-core Ryzen 5 models, followed two months later by 4-core Ryzen 3 chips. They fared against Intel's offerings in the same way as their bigger siblings, but they were significantly more cost effective.

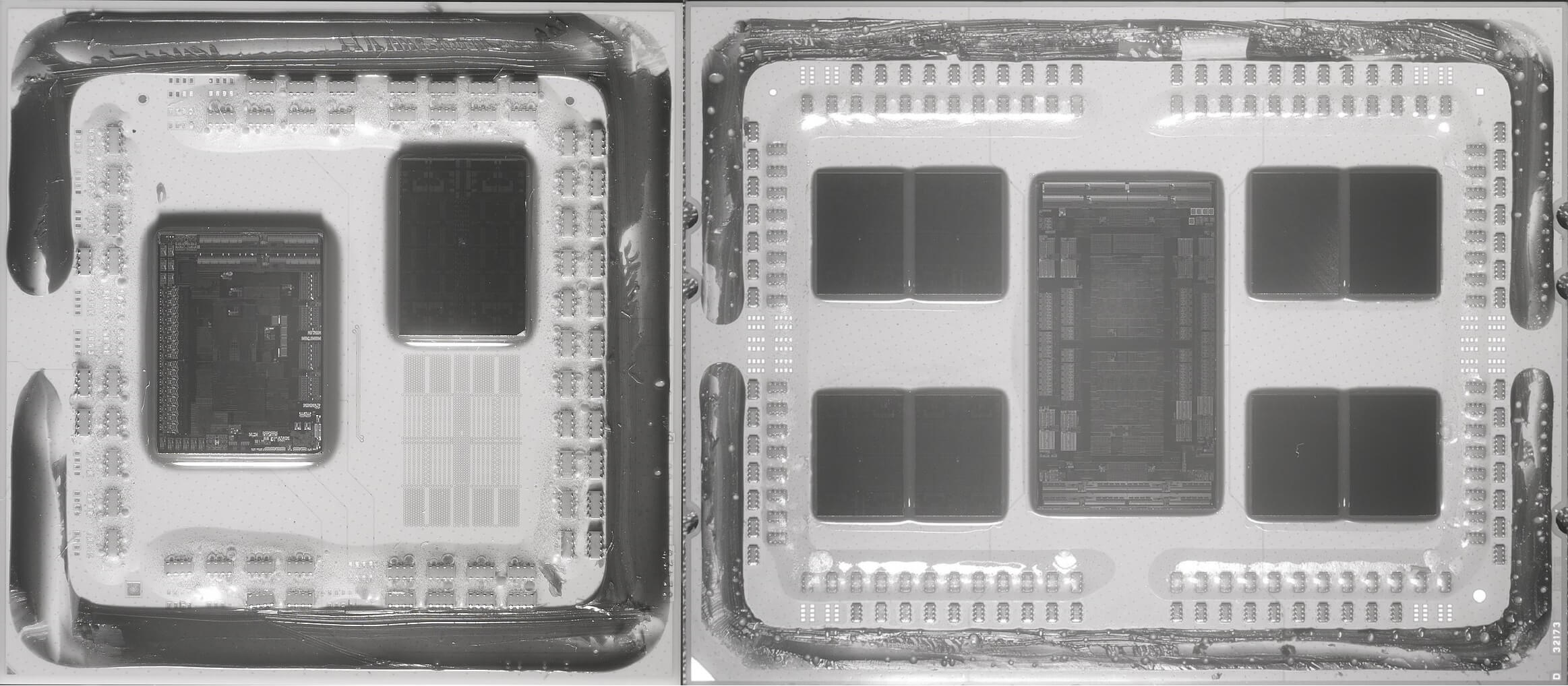

And then came the aces -- the 16-core, 32-thread Ryzen Threadripper 1950X (with an asking price of $1,000) and the 32-core, 64-thread EPYC processor for servers. These behemoths comprised two and four Ryzen 7 1800X chips, respectively, in the same package, using the new Infinity Fabric interconnect system to shift data between the chips.
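The core and thread counts above follow directly from the die layout: each first-generation Zen die carries two 4-core CCXs with two threads per core, and Threadripper and EPYC put two and four such dies in one package. The sketch below just does that counting, assuming exactly those figures; the class and function names are hypothetical and not part of any AMD tool.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ZenDie:
    """One first-generation Zen die (the same silicon as a Ryzen 7 1800X)."""
    ccx_per_die: int = 2       # two CPU Complexes (CCX) per die
    cores_per_ccx: int = 4     # four cores in each CCX
    threads_per_core: int = 2  # simultaneous multithreading (SMT)

    @property
    def cores(self) -> int:
        return self.ccx_per_die * self.cores_per_ccx

    @property
    def threads(self) -> int:
        return self.cores * self.threads_per_core


def package_totals(dies_per_package: int, die: ZenDie = ZenDie()) -> tuple[int, int]:
    """Return (cores, threads) for a package holding several identical dies."""
    return dies_per_package * die.cores, dies_per_package * die.threads


# Dies per package, as described in the text above.
products = {"Ryzen 7 1800X": 1, "Threadripper 1950X": 2, "EPYC (32-core)": 4}

for name, dies in products.items():
    cores, threads = package_totals(dies)
    print(f"{name}: {dies} die(s) -> {cores} cores, {threads} threads")
```

Running it reproduces the 8/16, 16/32 and 32/64 core/thread figures quoted for the three products, which is the point of the one-size-fits-all design: a single die, multiplied.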

In the space of 6 months, AMD showed that they were effectively targeting every x86 desktop market possible, with a single, one-size-fits-all design.

A year later, the architecture was updated to Zen+, which consisted of tweaks to the cache system and a switch from GlobalFoundries' venerable 14LPP process -- a node licensed from Samsung -- to an updated, denser 12LP process. The CPU dies remained the same size, but the new fabrication method allowed the processors to run at higher clock speeds.

Another 12 months after that, in the summer of 2019, AMD launched Zen 2. This time the changes were more significant and the term chiplet became all the rage. Rather than following a monolithic structure, where every part of the CPU is in the same piece of silicon (as in Zen and Zen+), the engineers separated the Core Complexes from the interconnect system.

The former were built by TSMC, using their N7 process, becoming full dies in their own right -- hence the name, Core Complex Die (CCD). The input/output structure was made by GlobalFoundries, with desktop Ryzen models using a 12LP chip, and Threadripper & EPYC sporting larger 14 nm versions.

The chiplet design will be retained and refined for Zen 3, currently pencilled in for release late in 2020. We're not likely to see the CCDs break Zen 2's eight-core, 16-thread layout; instead, it'll be a similar improvement to that seen with Zen+ (i.e. cache, power efficiency, and clock speed improvements).

It's worth taking stock of what AMD achieved with Zen. In the space of 8 years, the architecture went from a blank sheet of paper to a comprehensive portfolio of products, ranging from $99 4-core, 8-thread budget offerings through to $4,000+ 64-core, 128-thread server CPUs.
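The chiplet approach is what makes that spread possible: the product range is covered by varying how many 8-core CCDs sit around a single I/O die. The sketch below computes the minimum number of CCDs for a given core count, assuming 8 cores (two 4-core CCXs) and 16 threads per Zen 2 CCD, with any surplus cores simply disabled; the function names are hypothetical and this is an illustration, not an AMD spec sheet.

```python
import math

CORES_PER_CCD = 8      # Zen 2 CCD: eight cores, arranged as two 4-core CCXs
THREADS_PER_CORE = 2   # simultaneous multithreading (SMT)


def min_ccds(cores: int) -> int:
    """Smallest number of 8-core CCDs that can supply `cores` enabled cores.

    Assumes unneeded cores are simply disabled; real products may use more
    CCDs with fewer cores enabled on each chiplet.
    """
    if cores <= 0:
        raise ValueError("core count must be positive")
    return math.ceil(cores / CORES_PER_CCD)


def describe(cores: int) -> str:
    """Summarize the minimum chiplet count for a hypothetical product."""
    ccds = min_ccds(cores)
    threads = cores * THREADS_PER_CORE
    return f"{cores} cores / {threads} threads: at least {ccds} CCD(s) + 1 I/O die"


# From the $99 4-core part up to the 64-core server CPU.
for cores in (4, 8, 16, 64):
    print(describe(cores))
```

Under these assumptions the 64-core, 128-thread server CPU needs eight CCDs, while the 4-core budget part needs only one, partially enabled.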

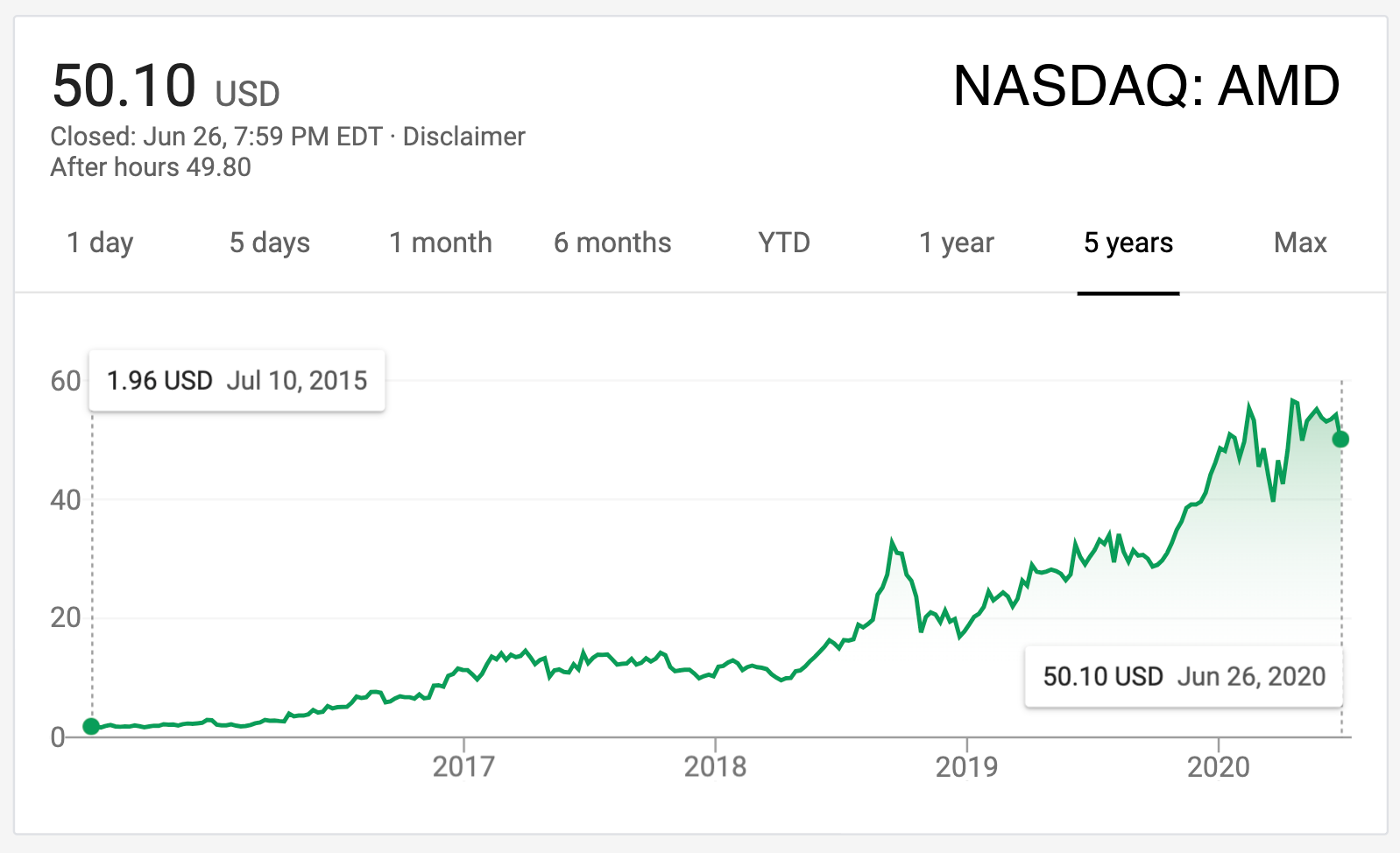

AMD's finances have changed dramatically too: from losses and debts running into the billions, AMD is now on track to clear its loans and post an operating income in excess of $600 million within the next year. While Zen may not be the sole factor in the company's financial revival, it has helped enormously.

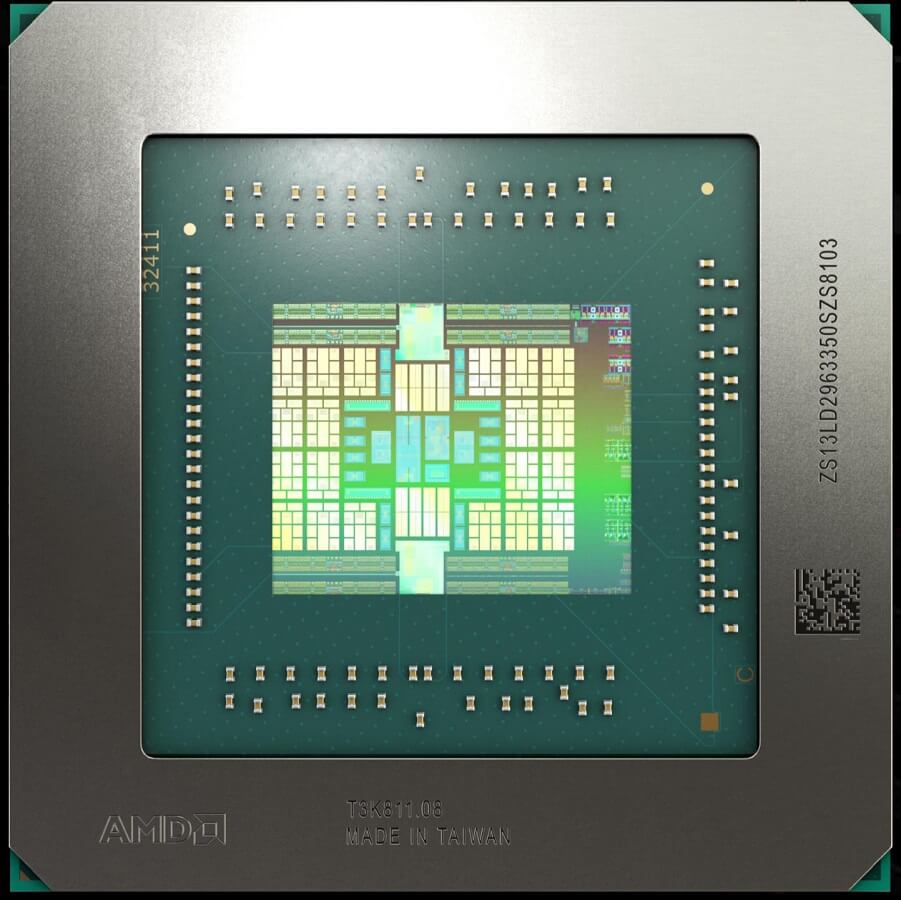

AMD's graphics division has seen a similar change in fortune -- in 2015, the division was given full independence, as the Radeon Technologies Group (RTG). The most significant development from their engineers came in the form of RDNA, a significant reworking of GCN. Changes to the cache structure, along with adjustments to the size and grouping of the compute units, shifted the focus of the architecture squarely towards gaming.

The first models to use this new architecture, the Radeon RX 5700 series, demonstrated the design's serious potential. This was not lost on Microsoft and Sony, as they both selected Zen 2 and the updated RDNA 2 to power their forthcoming new Xbox and PlayStation 5 consoles.

Although the Radeon Group hasn't enjoyed the same level of success as the CPU division, and their graphics cards are perhaps still seen as the "value option," AMD is quantifiably back to where it was in the Athlon 64 days in terms of architecture development and technological innovation. They rose to the top, fell from grace, and like a beast from mythology, created their own rebirth from the ashes.

Looking ahead with caution

It's perfectly reasonable to ask a simple question about AMD: could they return to the dark days of dismal products and no money?

Even if 2020 proves to be an excellent year for AMD, and positive Q1 financial results show a 40% improvement over the previous year, $9.4 billion of revenue still puts them behind Nvidia ($10.7 billion in 2019) and light years away from Intel ($72 billion). The latter has a much larger product portfolio, of course, and its own foundries, but Nvidia's income is reliant almost entirely on graphics cards.

It's clear that both revenue and operating income need to grow in order to fully stabilize AMD's future -- so how could this be achieved? The majority of their income is from what they call the Computing and Graphics segment, i.e. Ryzen and Radeon sales. This will undoubtedly continue to improve, as Ryzen is very competitive and the RDNA 2 architecture will provide a common platform for games that work as well on PCs as they do on next-generation consoles.

Intel's latest desktop CPUs hold an ever decreasing lead in gaming. They also lack the breadth of features that Zen 3 will offer. Nvidia holds the GPU performance crown, but faces stiff competition in the mid-range sector from Radeons. It's perhaps nothing more than a coincidence, but even though RTG is a fully independent division of AMD, its revenue and operating income are grouped with the CPU sector -- this suggests that their graphics cards, while popular, do not sell in the same quantities as their Ryzen products do.

Perhaps a more pressing issue for AMD is that their Enterprise, Embedded and Semi-Custom segment accounted for just under 20% of the Q1 2020 revenue, and ran at an operating loss. This may be explained by the fact that current-gen Xbox and PlayStation sales have stagnated, in light of the success of Nintendo's Switch and forthcoming new models from Microsoft and Sony. Intel has also utterly dominated the enterprise market, and nobody running a multi-million dollar datacenter is going to throw it all out just because an amazing new CPU is available.

But this could change over the next couple of years, partly through the new game consoles, but also from an unexpected alliance. Nvidia, of all companies, picked AMD over Intel as the choice of CPU for their new deep learning/AI compute clusters, the DGX A100. The reason is straightforward: the EPYC processor has more cores and memory channels, and faster PCI Express lanes, than anything Intel has to offer.

If Nvidia is happy to use AMD's products, others will surely follow suit. AMD will have to keep climbing a steep mountain, but today it would appear they have the right tools for the job. As TSMC continues to tweak and refine its N7 process node, all AMD chips made using the process are going to be incrementally better, too.

Looking forward, there are a few areas within AMD that could use genuine improvement. One such area is marketing. The 'Intel Inside' catchphrase and jingle have been ubiquitous for nearly 30 years, and while AMD spends some money promoting Ryzen, ultimately they need makers such as Dell, HP, and Lenovo to sell units sporting their processors in the same light and specifications as they do with Intel's.

On the software side, there's been plenty of work on applications that enhance users' experience, such as Ryzen Master, but only recently Radeon drivers were suffering from widespread problems. Gaming drivers can be hugely complex, but their quality can make or break the reputation of a piece of hardware.

AMD is currently in the strongest position it has ever been in its 51-year history. With the ambitious Zen project showing no signs of hitting any limits soon, the company's phoenix-like rebirth has been a tremendous success. They're not at the top of the mountain though, and perhaps that's for the better. It's said that history always repeats itself, but let's hope that this doesn't come to pass. A healthy and competitive AMD, fully able to meet Intel and Nvidia head-on, only brings benefits to users.

What are your thoughts on AMD and its trials and tribulations -- did you own a K6 chip, or perhaps an Athlon? What's your favorite Radeon graphics card? Which Zen-based processor are you most impressed by? Share your comments in the section below.

Source: https://www.techspot.com/article/2043-amd-rise-fall-revival-history/

Posted by: nowellacurnhooks.blogspot.com
